How drones, AI & Open Source Software can be used to combat Alien Invasive Plants in South Africa

Alien Invasive Plants (AIP) have become a major threat to South Africa’s sensitive Fynbos biome. In 2017 and 2018, fires in the Western Cape killed 8 people, destroyed over 2000 homes and devastated the region's biodiversity. The intensity of these fires was amplified by massive amounts of AIP, in particular Black Wattle (Acacia mearnsii), Gum (Eucalyptus sp.) and Pine (Pinus sp.), which have gone unchecked for decades. Now, in 2025, the problem is even more pronounced, and current methods of monitoring and clearing AIP are inefficient, time-consuming and costly.

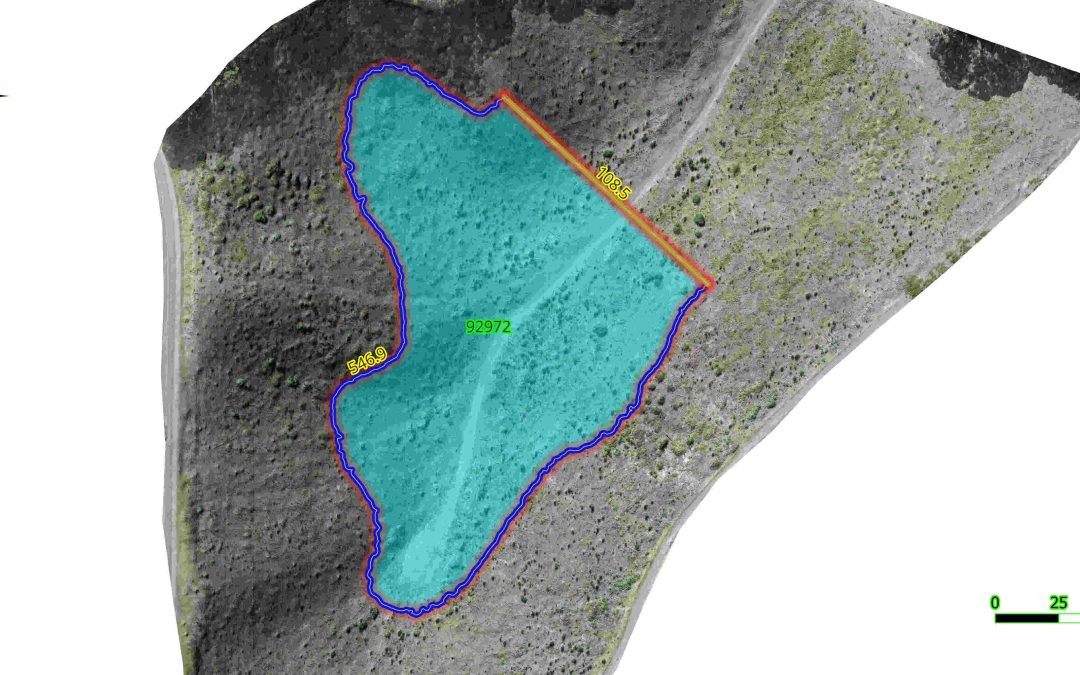

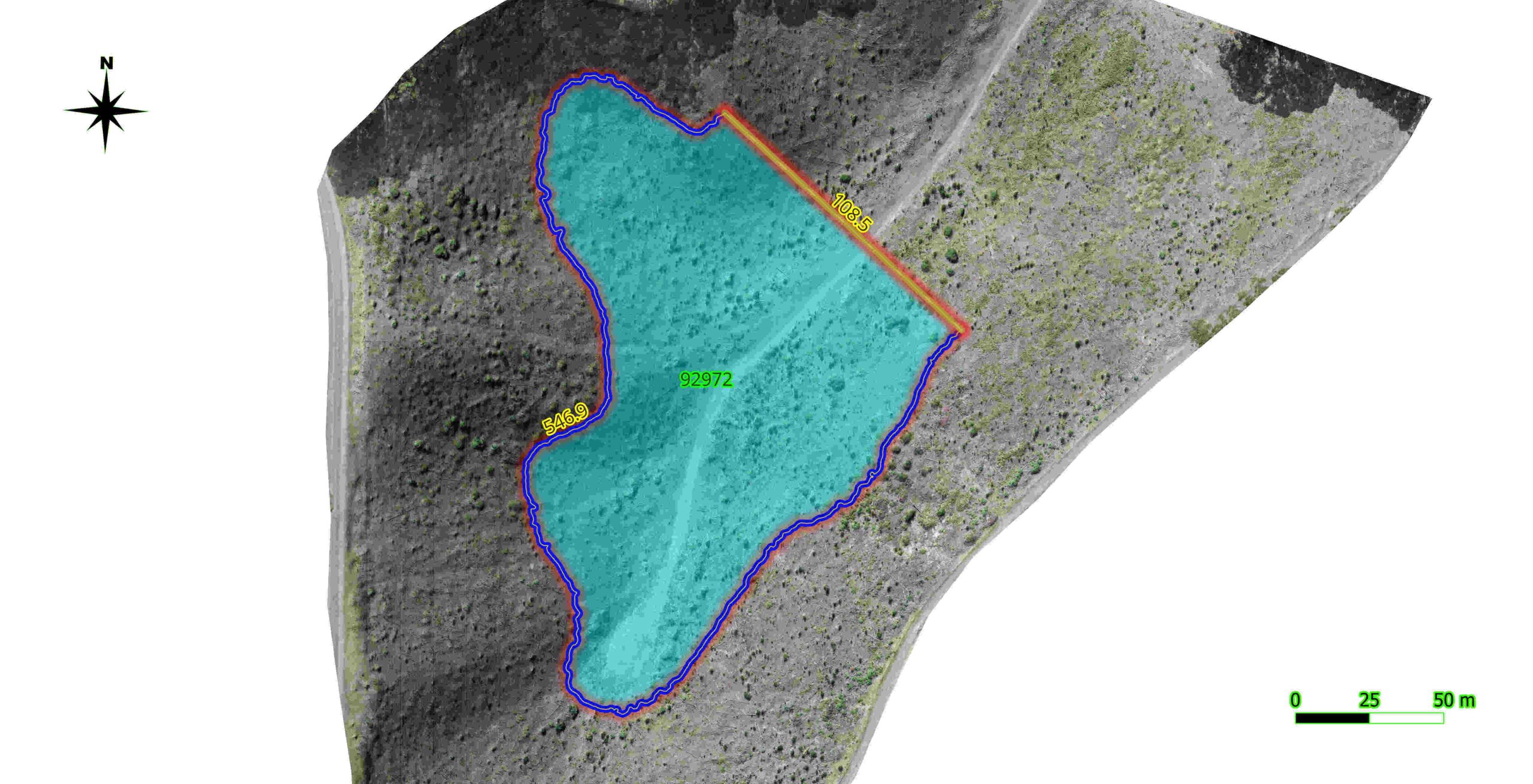

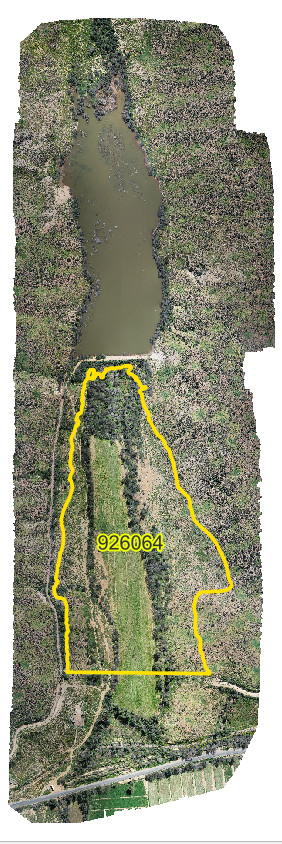

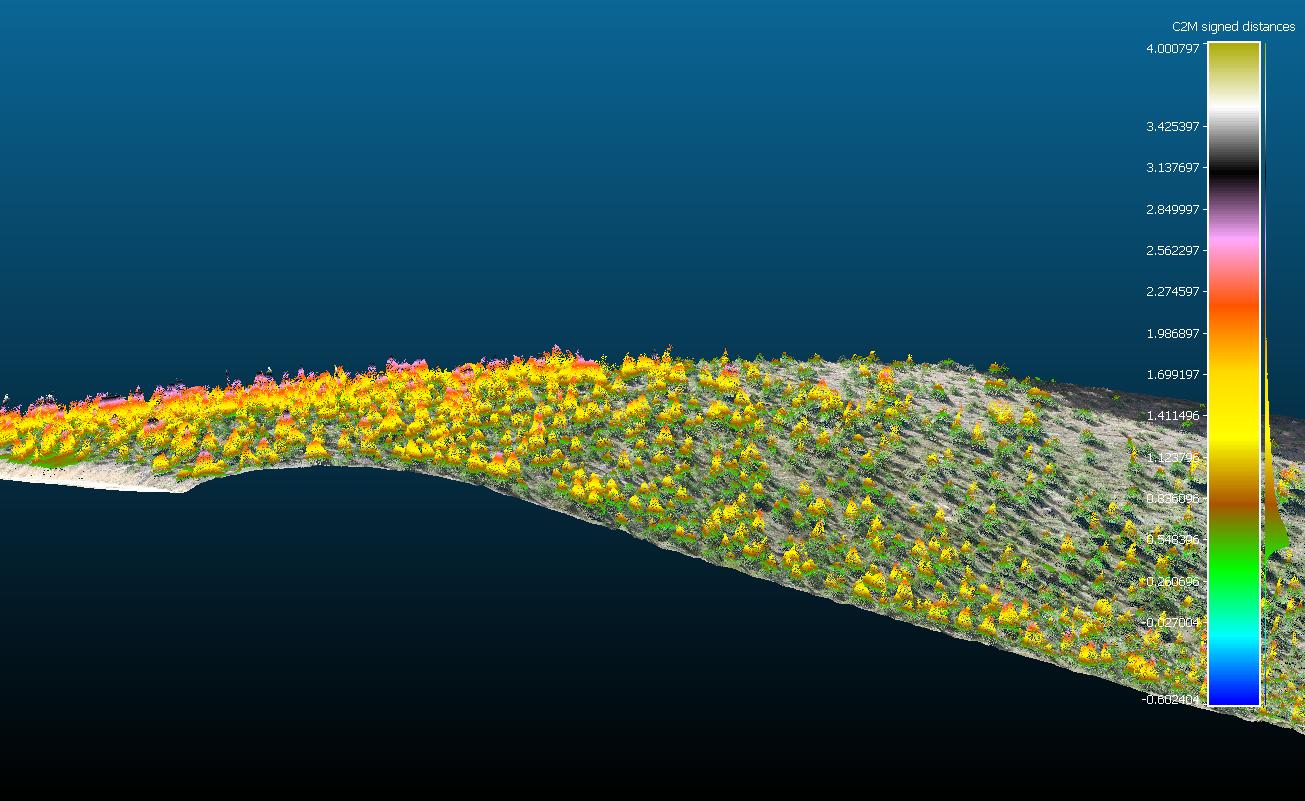

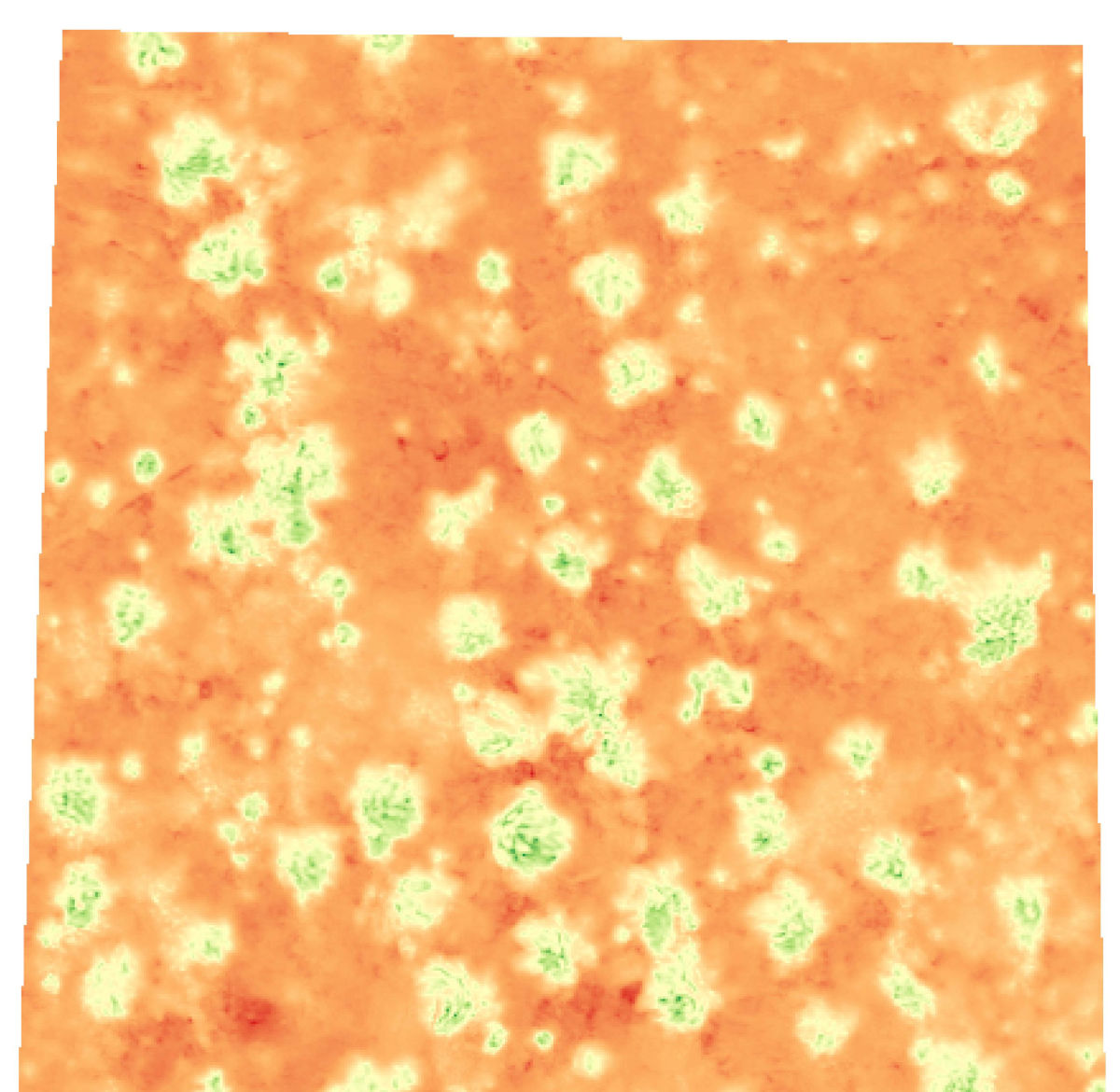

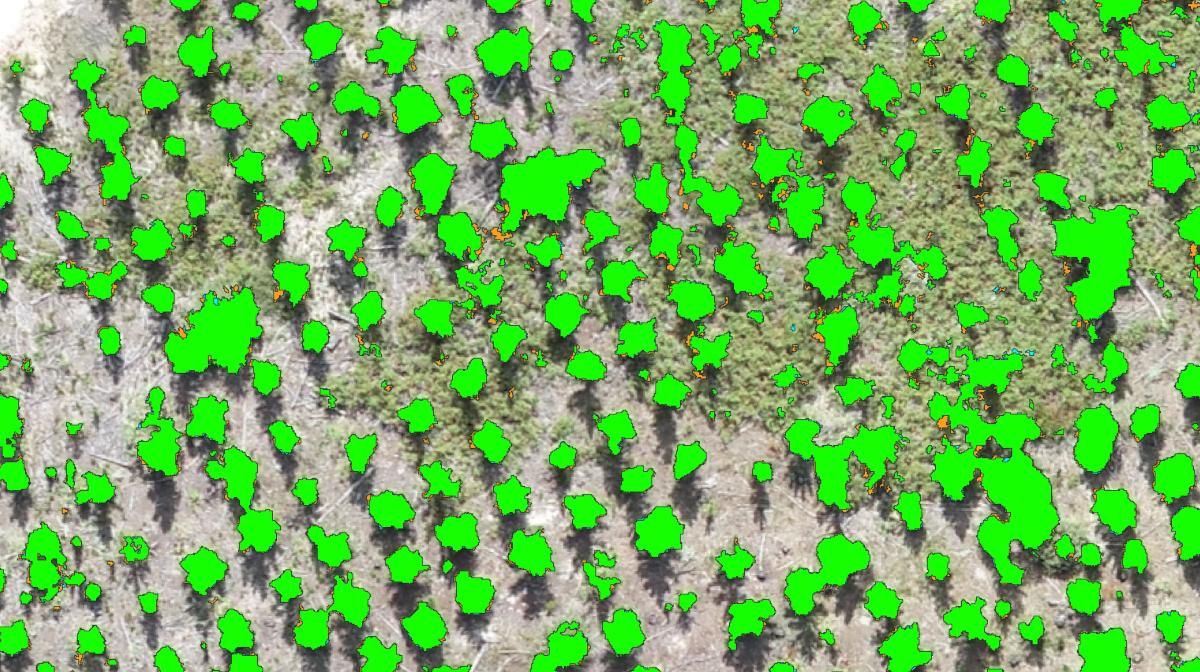

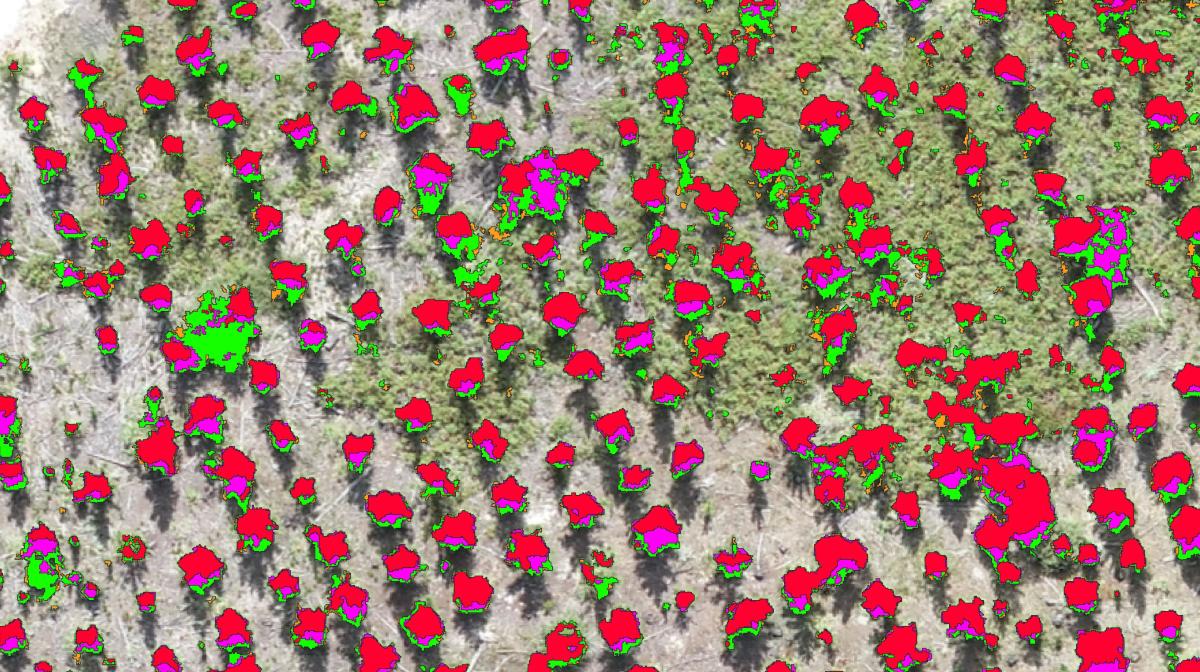

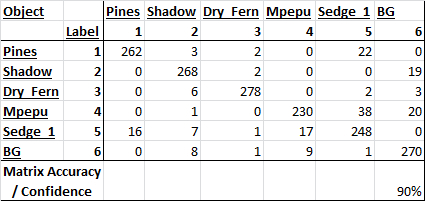

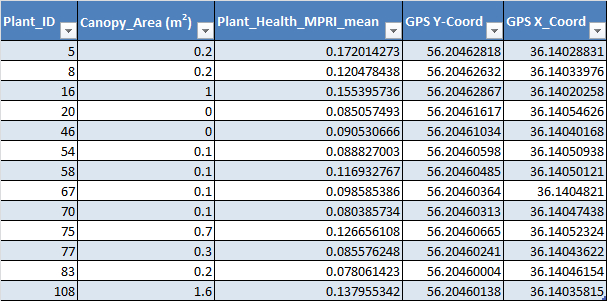

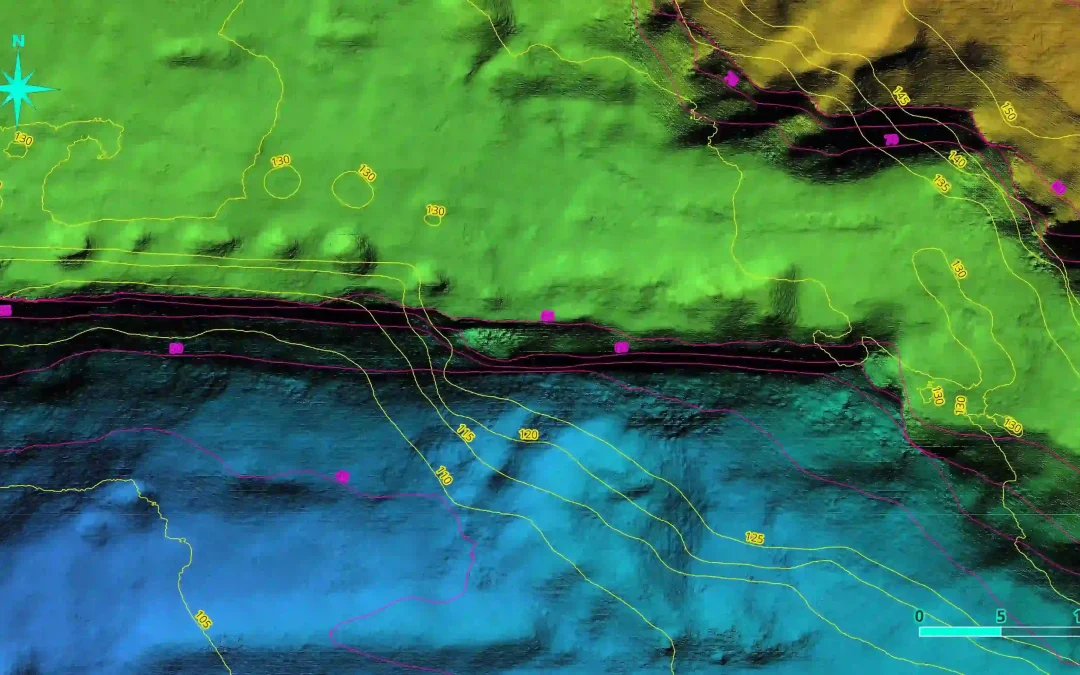

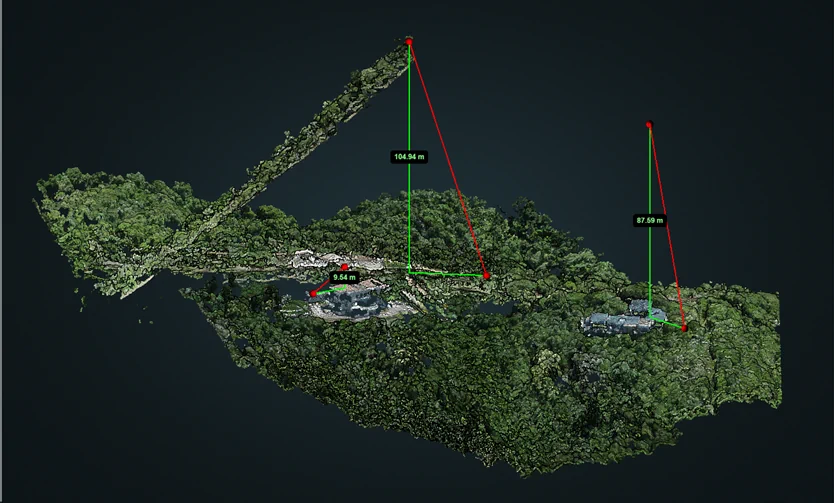

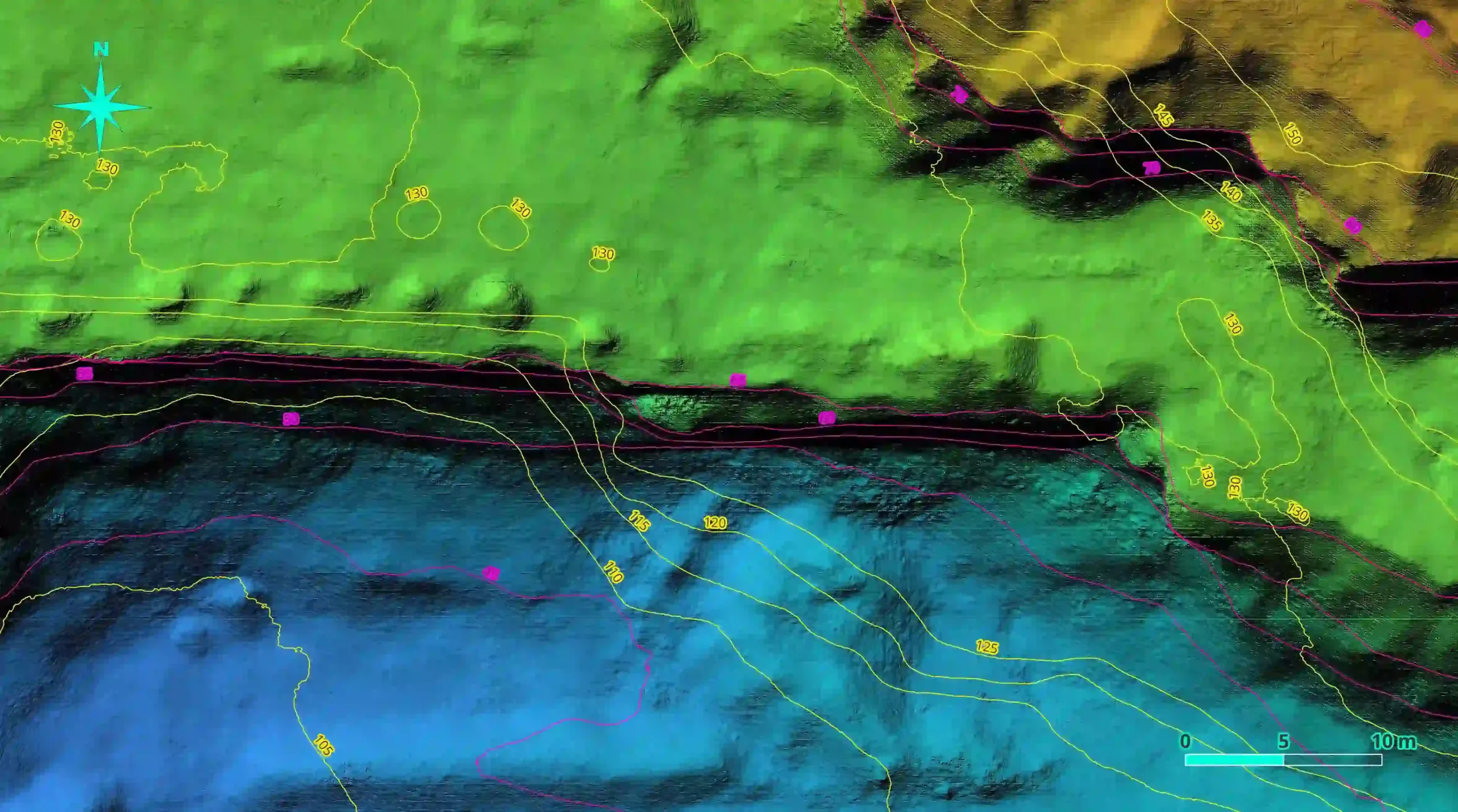

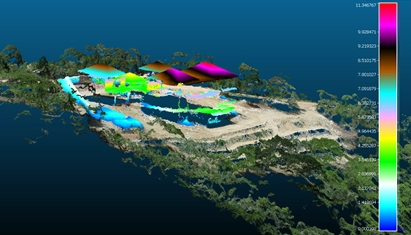

Drone technology and open source software can be used to map and locate AIP stands, classify them by age, determine ease of access and urgency for clearing, estimate burn intensity, and then plan removal projects based on this information. Drones can also clear AIP directly, using precision spray methods to kill off very dense stands. Coupled with ground-based removals, this will drastically improve on current methods, and we may actually have a small chance of regaining the biodiversity lost.
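
To make the planning step more concrete, here is a minimal Python sketch of how mapped stands might be ranked for clearing. It is only an illustration, not the workflow used in the presentation: the `Stand` fields, the `WEIGHTS` values, the 0 to 1 scales and the `dense_threshold` cut-off are all assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only for illustration (not from the presentation).
WEIGHTS = {"density": 0.4, "burn_intensity": 0.4, "access": 0.2}


@dataclass
class Stand:
    stand_id: str
    species: str
    density: float         # 0..1, assumed canopy cover fraction from the drone map
    burn_intensity: float  # 0..1, assumed fuel-load index
    access: float          # 0..1, where 1 means easy for ground teams to reach


def urgency(stand: Stand) -> float:
    """Weighted sum of the mapped attributes; higher means clear sooner."""
    return (WEIGHTS["density"] * stand.density
            + WEIGHTS["burn_intensity"] * stand.burn_intensity
            + WEIGHTS["access"] * stand.access)


def plan_clearing(stands: list[Stand]) -> list[Stand]:
    """Return the stands ordered from most to least urgent."""
    return sorted(stands, key=urgency, reverse=True)


def suggest_method(stand: Stand, dense_threshold: float = 0.8) -> str:
    """Very dense stands go to drone spraying, the rest to ground teams."""
    if stand.density >= dense_threshold:
        return "drone precision spray"
    return "ground-based removal"


if __name__ == "__main__":
    # Made-up example stands, not survey data.
    stands = [
        Stand("B", "Pinus sp.", density=0.4, burn_intensity=0.5, access=0.9),
        Stand("A", "Acacia mearnsii", density=0.9, burn_intensity=0.8, access=0.3),
        Stand("C", "Eucalyptus sp.", density=0.6, burn_intensity=0.9, access=0.5),
    ]
    for stand in plan_clearing(stands):
        print(stand.stand_id, round(urgency(stand), 2), suggest_method(stand))
```

In practice the attributes would come from the drone survey and classification outputs, and the weights would need to be agreed with the people planning the removal projects.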

This presentation was hosted by the Save Wild Project. For more information, have a look at the presentation given to Western Cape municipality, government and communities on tech-based applications of UAVs for AIP control here: